Modern enterprises struggle with a critical bottleneck: the time between asking a business question and receiving an actionable answer. AI data visualization is the use of artificial intelligence to automatically analyze complex datasets and to generate and interpret visualizations from them, enabling faster, data-driven decision-making. Integrated with Snowflake's cloud data platform, these capabilities target a costly problem: 61% of managers report that at least half of their decision-making time is ineffective, which for a typical Fortune 500 firm can add up to roughly 530,000 manager-days a year. By combining AI data insights with enterprise data visualization platforms, organizations can turn siloed data into interactive business intelligence that accelerates strategic responses and strengthens competitive positioning.

Introduction to AI Data Visualization for Enterprise Decisions

The demand for optimized analytics has never been higher. Enterprise leaders face mounting pressure to extract insights from increasingly complex data ecosystems while empowering non-technical teams to make informed decisions independently. Traditional business intelligence workflows create delays that compound across departments, slowing market response and limiting innovation velocity.

Snowflake has emerged as a critical platform in this evolution, providing a unified architecture that supports both structured and unstructured data at scale. The platform's native AI capabilities reduce the technical friction that historically separated data storage from insight generation. AI-driven analytics deployed within Snowflake can cut analytics latency by up to 50% for Fortune 500 manufacturers, directly addressing the inefficiencies that plague enterprise decision-making.

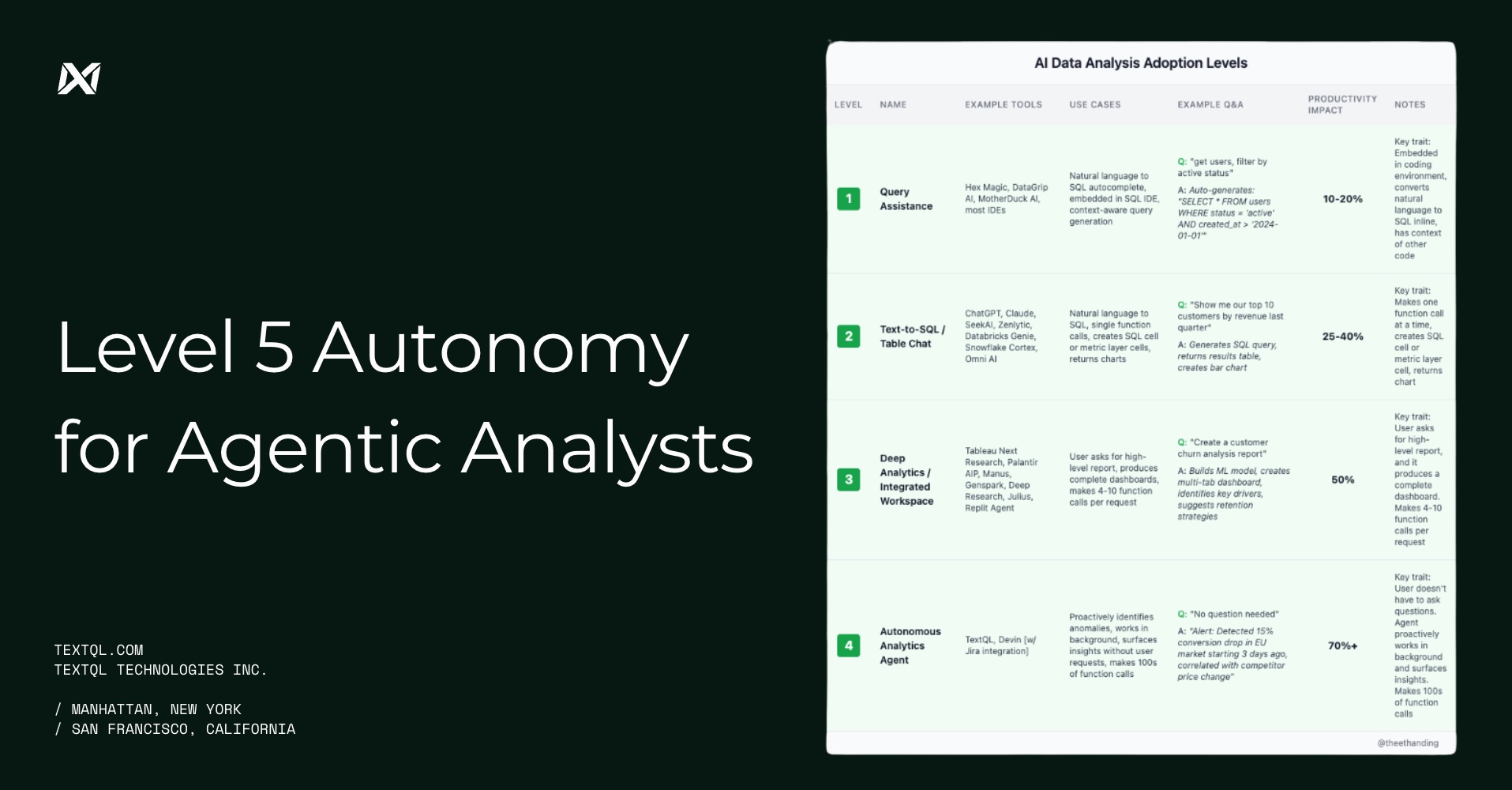

The shift toward AI-powered visualization represents more than incremental improvement. It fundamentally changes who can access insights, how quickly those insights surface, and the sophistication of questions that business users can answer without specialized technical support.

How Snowflake's AI Capabilities Enhance Decision Speed

Analytics latency—the delay between posing a data question and receiving an actionable answer—represents one of the most expensive hidden costs in enterprise operations. Snowflake's AI-driven features directly target this bottleneck through multiple mechanisms that reduce friction at every stage of the insight generation process.

The platform's support for natural language queries transforms the user experience for business analysts and executives. Instead of waiting for data teams to translate requests into SQL, users can ask questions in plain language and receive instant visualizations. Text-to-SQL prompts and embedded AI agents enable business users to extract insights without technical intervention, democratizing access to sophisticated analytics capabilities.

Traditional BI workflows typically require multiple steps: data extraction, transformation, visualization design, and interpretation. AI-powered workflows in Snowflake collapse these stages into a single conversational interaction. The platform's AI agents can detect up to 95% of query errors in real time, catching mistakes before they waste compute and analyst cycles and making accurate results on the first attempt far more likely.
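How Snowflake's agents detect errors is not described here, so the following is only a minimal Python sketch of the general idea: before running a query generated from a natural-language question, compile it against the known schema and reject anything that fails or is not read-only. It uses the standard library's SQLite driver as a stand-in for a warehouse; the `sales` table, its columns, and `validate_query` are hypothetical.

```python
import sqlite3
from typing import Optional

# Hypothetical schema standing in for a governed warehouse table.
SCHEMA = "CREATE TABLE sales (region TEXT, order_date TEXT, revenue REAL)"


def validate_query(sql: str) -> Optional[str]:
    """Return None if the generated SQL is a SELECT that compiles, else an error message."""
    # Simple read-only guard; note it also rejects queries that start with a WITH clause.
    if not sql.lstrip().upper().startswith("SELECT"):
        return "only SELECT statements are allowed"
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute(SCHEMA)
        # EXPLAIN compiles the statement (resolving tables and columns) without running it.
        conn.execute("EXPLAIN " + sql)
        return None
    except sqlite3.Error as exc:
        return str(exc)
    finally:
        conn.close()


print(validate_query("SELECT region, SUM(revenue) FROM sales GROUP BY region"))  # None
print(validate_query("SELECT revnue FROM sales"))  # an error naming the unknown column
```

A production system would compile against the target warehouse itself (for example through its own EXPLAIN support) and could feed the error text back to the language model for a corrected attempt.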

The speed advantage compounds when organizations deploy AI across multiple use cases. Marketing teams can analyze campaign performance, finance can model scenarios, and operations can monitor supply chain metrics—all without competing for limited data engineering resources. This parallel processing of business questions represents a fundamental shift in how enterprises operate at scale.

Implementing Golden Datasets for Optimized AI Insights

The quality of AI-generated insights depends directly on the quality of underlying data. Golden datasets are curated collections optimized for AI and GenAI use cases, tailored to both structured and unstructured data requirements. These datasets serve as the foundation for reliable, repeatable analytics that business users can trust.

Creating golden datasets requires a systematic approach to data preparation. Organizations must first identify their most critical business questions and the data sources required to answer them. Data cleansing removes duplicates, corrects errors, and standardizes formats across disparate systems. Standardization ensures that metrics like "customer lifetime value" or "conversion rate" mean the same thing across departments, eliminating confusion and misinterpretation.
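The cleansing and standardization steps can be sketched in a few lines of Python. This is an illustrative example, not a Snowflake feature: the record fields, the country mapping, and the accepted date formats are hypothetical, and "keep the first record per normalized email" is just one possible deduplication policy.

```python
from datetime import datetime

# Hypothetical raw customer records arriving from two source systems.
RAW = [
    {"email": " Ana@Example.com ", "country": "usa", "signup": "2024-03-05"},
    {"email": "ana@example.com", "country": "US", "signup": "03/05/2024"},
    {"email": "li@example.com", "country": "United States", "signup": "2024-04-10"},
]

# Hypothetical mapping from source spellings to ISO country codes.
COUNTRY_CODES = {"usa": "US", "us": "US", "united states": "US"}
DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y")


def parse_date(value: str) -> str:
    """Normalize any accepted date format to ISO 8601 (YYYY-MM-DD)."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(value, fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date: {value!r}")


def standardize(record: dict) -> dict:
    """Trim and lowercase emails, map country spellings to codes, normalize dates."""
    country = record["country"].strip()
    return {
        "email": record["email"].strip().lower(),
        "country": COUNTRY_CODES.get(country.lower(), country.upper()),
        "signup": parse_date(record["signup"].strip()),
    }


def cleanse(records: list) -> list:
    """Standardize every record, then keep the first record per normalized email."""
    unique = {}
    for record in map(standardize, records):
        unique.setdefault(record["email"], record)
    return list(unique.values())


for row in cleanse(RAW):
    print(row)
```

Standardizing before deduplicating matters: the two Ana records only collapse into one once their emails and dates share a single format.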

Continuous enrichment keeps golden datasets current and relevant. As new data sources come online or business priorities shift, the curation process adapts to incorporate fresh signals while maintaining historical consistency. Sigma Computing reports that well-curated datasets reduce manual data preparation time by 60 to 70%, freeing analysts to focus on interpretation rather than wrangling.

Snowflake provides several features that automate or enhance dataset quality. Built-in data quality rules validate incoming data against predefined standards. Version control tracks changes over time, allowing teams to audit how datasets evolve. Metadata management tags datasets with business context, making it easier for AI agents to understand which data sources are authoritative for specific questions.
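To make "data quality rules" concrete, here is a minimal Python sketch in which each rule is a named predicate on one column, and incoming rows are split into accepted and rejected sets with the reasons recorded. The columns and rules are hypothetical; Snowflake's built-in quality features are configured in the platform rather than written like this.

```python
from typing import Callable, List, Tuple

# Hypothetical rules: (column, description, predicate that must hold for the value).
RULES: List[Tuple[str, str, Callable[[object], bool]]] = [
    ("order_id", "must be present", lambda v: v is not None),
    ("amount", "must be non-negative", lambda v: isinstance(v, (int, float)) and v >= 0),
    ("currency", "must be a 3-letter uppercase code",
     lambda v: isinstance(v, str) and len(v) == 3 and v.isalpha() and v.isupper()),
]


def validate(rows):
    """Split rows into accepted and rejected; rejected rows carry the rules they broke."""
    accepted, rejected = [], []
    for row in rows:
        failures = [f"{col} {desc}" for col, desc, check in RULES if not check(row.get(col))]
        if failures:
            rejected.append((row, failures))
        else:
            accepted.append(row)
    return accepted, rejected


incoming = [
    {"order_id": 1, "amount": 25.0, "currency": "USD"},
    {"order_id": None, "amount": -5, "currency": "usd"},
]
ok, bad = validate(incoming)
print(len(ok), bad[0][1])
```

Recording every failed rule, rather than stopping at the first, gives source-system owners the full picture in one pass.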

The investment in golden datasets pays dividends across the entire analytics ecosystem. AI models trained on high-quality data generate more accurate predictions. Visualizations based on trusted datasets carry more weight in executive decision-making. Teams spend less time debating data definitions and more time acting on insights.

Leveraging Natural Language Processing to Democratize Data Access

Natural Language Processing (NLP) in analytics enables users to ask business questions as text and receive instant, conversational insights and visualizations. This capability fundamentally changes who can participate in data-driven decision-making, extending sophisticated analytics beyond the data team to every business function.

The traditional barrier to data access has been technical complexity. SQL queries require specialized knowledge that most business users lack. Dashboard tools offer pre-built visualizations but cannot answer novel questions without developer intervention. NLP agents in Snowflake bridge this gap by interpreting intent, translating natural language into executable queries, and presenting results in intuitive visual formats.

The types of questions that users can answer expand dramatically with natural language interfaces. Traditional dashboards excel at showing what happened—displaying historical trends and performance metrics. NLP-powered systems enable exploratory analysis: "Why did sales decline in the Northeast region last quarter?" or "Which customer segments show the highest propensity to churn?" These questions require dynamic query construction and contextual understanding that static dashboards cannot provide.

Real-time error detection helps users get accurate results even when their questions are ambiguous or rest on assumptions that need validation. The system can prompt for clarification, suggest alternative formulations, or automatically correct common mistakes. This guidance helps users refine their analytical thinking while maintaining momentum toward actionable insights.
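One way to picture "validating assumptions" is a pre-processing step that turns relative phrases into explicit values, or asks the user when it cannot. The sketch below is hypothetical: the phrase list and the `resolve_question` helper are illustrative, and calendar quarters are assumed rather than fiscal ones. It shows how "last quarter" in a question like the Northeast sales example can be pinned to concrete dates before a query is built.

```python
from datetime import date, timedelta

VAGUE_TERMS = ("recently", "lately")  # hypothetical terms that trigger a clarification


def last_quarter_range(today: date) -> tuple:
    """Return (first_day, last_day) of the calendar quarter before today's quarter."""
    current_q = (today.month - 1) // 3  # 0-based quarter index
    year, prev_q = (today.year - 1, 3) if current_q == 0 else (today.year, current_q - 1)
    start = date(year, prev_q * 3 + 1, 1)
    next_start = date(year + 1, 1, 1) if prev_q == 3 else date(year, prev_q * 3 + 4, 1)
    return start, next_start - timedelta(days=1)


def resolve_question(question: str, today: date) -> dict:
    """Ask for clarification on vague time terms; otherwise make known ones explicit."""
    text = question.lower()
    if any(term in text for term in VAGUE_TERMS):
        return {"status": "clarify", "prompt": "Which date range should I use?"}
    if "last quarter" in text:
        start, end = last_quarter_range(today)
        return {"status": "resolved", "start": start, "end": end}
    return {"status": "resolved", "start": None, "end": None}


print(resolve_question("Why did sales decline in the Northeast region last quarter?", date(2024, 5, 15)))
# start 2024-01-01, end 2024-03-31
```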

Organizations that successfully deploy natural language analytics report measurable improvements in data literacy across their workforce. Marketing managers who previously relied on monthly reports now conduct daily performance reviews. Product teams iterate faster by testing hypotheses without waiting for analyst support. The cumulative effect is a more agile, responsive organization that can adapt to market changes with greater speed and confidence.

Integrating Diverse Data Sources Seamlessly with Snowflake

Seamless integration prevents analysis delays and ensures a comprehensive view across business domains—finance, marketing, operations, and customer service. Modern enterprises generate data across dozens or hundreds of systems, each with its own schema, update frequency, and access requirements. Snowflake's architecture streamlines access to siloed or real-time data sources, making it possible to analyze the full business context in a single platform.

Multimodal data ingestion is the process of continuously capturing and unifying structured, semi-structured, and unstructured data streams for AI analysis. Snowflake Openflow enables continuous ingestion and pre-processing, easing a common limitation of traditional AI frameworks: the extensive data engineering they require before analysis can begin. The platform handles JSON, XML, Parquet, and other formats natively, reducing the need for complex ETL pipelines.
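Semi-structured records are typically nested. Snowflake can store them as-is in its VARIANT type and query nested fields directly; the Python sketch below only illustrates the underlying idea of flattening a nested event into dotted column names so it can sit beside structured data. The event shape and the choice to keep arrays as JSON text are assumptions for illustration.

```python
import json


def flatten(record: dict, prefix: str = "") -> dict:
    """Flatten nested objects into dotted column names; arrays are kept as JSON text."""
    flat = {}
    for key, value in record.items():
        name = f"{prefix}{key}"
        if isinstance(value, dict):
            flat.update(flatten(value, prefix=f"{name}."))
        elif isinstance(value, list):
            flat[name] = json.dumps(value)
        else:
            flat[name] = value
    return flat


# Hypothetical clickstream event as it might arrive from a streaming source.
event = json.loads('{"id": 7, "user": {"id": 42, "geo": {"country": "DE"}}, "tags": ["promo", "mobile"]}')
print(flatten(event))
# {'id': 7, 'user.id': 42, 'user.geo.country': 'DE', 'tags': '["promo", "mobile"]'}
```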

The integration process follows a logical sequence. First, identify all relevant data sources—cloud applications, on-premise databases, third-party APIs, and streaming feeds. Second, establish secure connections using Snowflake's native connectors or custom integrations. Third, configure ingestion schedules that balance freshness requirements with infrastructure costs. Fourth, implement data quality checks that validate incoming data before it enters the analytics ecosystem.
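The third step, balancing freshness against cost, is often handled with incremental loads: each scheduled run picks up only rows changed since the last high-water mark, so running more often does not mean reprocessing history. A minimal sketch, assuming each source row carries an `updated_at` timestamp (a hypothetical field name):

```python
from datetime import datetime


def incremental_batch(source_rows, watermark):
    """Return rows updated after `watermark`, plus the new watermark to store for the next run."""
    new_rows = [row for row in source_rows if row["updated_at"] > watermark]
    new_watermark = max((row["updated_at"] for row in new_rows), default=watermark)
    return new_rows, new_watermark


rows = [
    {"id": 1, "updated_at": datetime(2024, 6, 1, 8, 0)},
    {"id": 2, "updated_at": datetime(2024, 6, 1, 9, 0)},
    {"id": 3, "updated_at": datetime(2024, 6, 1, 10, 0)},
]
batch, mark = incremental_batch(rows, watermark=datetime(2024, 6, 1, 9, 0))
print([r["id"] for r in batch], mark)  # [3] 2024-06-01 10:00:00
```

The strict `>` comparison avoids reloading the boundary row; sources where several rows can share a timestamp need a tie-breaker (such as an ID) so late arrivals are not skipped.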

Real-time data integration unlocks use cases that were previously impractical. Supply chain teams can monitor inventory levels across global warehouses and automatically trigger reorder workflows when thresholds are breached. Marketing can adjust campaign spend based on live conversion data rather than waiting for end-of-day reports. Customer service can access complete interaction histories that span web, mobile, and call center channels.
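The supply chain example reduces to a threshold rule evaluated over fresh inventory data. The sketch below is hypothetical: the `StockLevel` fields and the fixed reorder quantity are illustrative, and a real workflow would send the resulting requests to a purchasing system rather than return them.

```python
from dataclasses import dataclass


@dataclass
class StockLevel:
    warehouse: str
    sku: str
    on_hand: int
    reorder_point: int  # trigger when on_hand falls to or below this level
    reorder_qty: int    # fixed quantity to request (an illustrative policy)


def reorder_requests(levels):
    """Return one purchase request per SKU and warehouse at or below its reorder point."""
    return [
        {"warehouse": s.warehouse, "sku": s.sku, "quantity": s.reorder_qty}
        for s in levels
        if s.on_hand <= s.reorder_point
    ]


snapshot = [
    StockLevel("Rotterdam", "SKU-100", on_hand=40, reorder_point=50, reorder_qty=200),
    StockLevel("Dallas", "SKU-100", on_hand=120, reorder_point=50, reorder_qty=200),
]
print(reorder_requests(snapshot))
# [{'warehouse': 'Rotterdam', 'sku': 'SKU-100', 'quantity': 200}]
```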

The unified data model that results from comprehensive integration creates a single source of truth for AI-driven analytics. When all business domains share the same underlying data platform, insights become more reliable and cross-functional collaboration improves. Teams spend less time reconciling conflicting reports and more time aligning on strategic priorities.

Adopting AI-Driven Analytics for Instant and Predictive Insights

AI-driven analytics is the autonomous or semi-autonomous application of statistical models, machine learning, and AI agents to extract insights, predict trends, and recommend actions. Embedding AI and ML directly in Snowflake allows immediate analysis without data egress, reducing costs and complexity while maintaining security and governance controls.

The shift from descriptive to predictive analytics represents a maturity leap for most organizations. Descriptive analytics answers "what happened" by summarizing historical data. Predictive analytics answers "what will happen" by identifying patterns and extrapolating future outcomes. Prescriptive analytics goes further, recommending specific actions to achieve desired results.

AI-powered dashboards generate summaries and narratives for quick data interpretation, eliminating the manual effort required to translate charts into business language. These systems can highlight anomalies, explain contributing factors, and suggest areas for deeper investigation. The narrative layer makes insights accessible to executives who need to understand implications without diving into technical details.
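A narrative layer can be approximated with a statistical anomaly test plus templated sentences. This sketch flags points whose z-score (against the sample standard deviation) reaches a threshold of 2, both arbitrary illustrative choices. Production systems, including generative-AI summaries, work very differently, and the weekly revenue figures are made up.

```python
import statistics


def narrate(metric, values, labels, z_threshold=2.0):
    """Summarize a series in one sentence, then add a sentence per anomalous period."""
    mean = statistics.mean(values)
    stdev = statistics.stdev(values)
    lines = [f"{metric} averaged {mean:,.0f} across {len(values)} periods."]
    for label, value in zip(labels, values):
        z = (value - mean) / stdev if stdev else 0.0
        if abs(z) >= z_threshold:
            direction = "above" if z > 0 else "below"
            lines.append(f"{label} was unusually {direction} average ({value:,.0f}, z={z:.1f}).")
    return " ".join(lines)


weekly_revenue = [100, 102, 98, 101, 99, 100, 103, 160]  # hypothetical
print(narrate("Revenue", weekly_revenue, [f"W{i}" for i in range(1, 9)]))
```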

Customer success stories demonstrate the practical impact of AI-driven analytics at scale. Coinbase and IGS Energy built faster, scalable workflows natively in Snowflake, enabling real-time decision-making in highly competitive markets. Coinbase uses predictive models to detect fraudulent transactions before they complete, protecting both the company and its customers. IGS Energy optimizes pricing strategies by forecasting demand patterns and adjusting offers dynamically.

The transition to AI-driven analytics requires careful planning. Organizations should start with high-value use cases where predictions directly influence business outcomes—customer churn prevention, inventory optimization, or dynamic pricing. Build initial models using historical data, validate accuracy against known outcomes, and gradually expand to more complex scenarios. Monitor model performance continuously and retrain as business conditions evolve.
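Validating against known outcomes usually means a backtest: hold out the most recent periods, forecast them from the earlier data, and compare the error with a naive baseline. A minimal sketch with hypothetical data; the drift model and mean absolute error are illustrative choices, not a recommendation for any particular use case.

```python
def naive_forecast(history, horizon):
    """Baseline: repeat the last observed value."""
    return [history[-1]] * horizon


def drift_forecast(history, horizon):
    """Extend the average change per period observed in the history."""
    step = (history[-1] - history[0]) / (len(history) - 1)
    return [history[-1] + step * (i + 1) for i in range(horizon)]


def mean_absolute_error(actual, predicted):
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def backtest(series, horizon, model):
    """Fit on all but the last `horizon` points and score the held-out points."""
    train, test = series[:-horizon], series[-horizon:]
    return mean_absolute_error(test, model(train, horizon))


weekly_demand = [10, 12, 14, 16, 18, 20]  # hypothetical
print(backtest(weekly_demand, 2, naive_forecast))  # 3.0
print(backtest(weekly_demand, 2, drift_forecast))  # 0.0
```

A model that cannot beat the naive baseline on held-out data is not ready to drive decisions; rerunning the same backtest after each retraining is one simple way to monitor it as conditions change.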

Overcoming Challenges in Scaling AI Data Visualization on Snowflake

Scaling AI data visualization introduces technical and strategic barriers that can derail even well-planned initiatives. Poor data quality, unpredictable infrastructure spend, and cross-environment integration challenges represent the most common obstacles that organizations face as they expand AI capabilities across their enterprise.

Poor data quality can be mitigated through built-in processes and governance within Snowflake, reducing errors and enabling more reliable insights. Data quality issues compound as scale increases—a small percentage of errors in a single dataset becomes a significant problem when that dataset feeds dozens of AI models. Implementing automated validation rules, establishing clear data ownership, and creating feedback loops that surface quality issues to source system owners are essential practices.

Infrastructure costs become less predictable as AI workloads scale. Complex queries, large-scale model training, and real-time inference all consume compute resources at varying rates. Snowflake Openflow and AI-driven recommendations for query efficiency and spend management help organizations optimize resource allocation. These tools provide visibility into which workloads drive costs, identify opportunities for query optimization, and suggest configuration changes that improve price-performance ratios.
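Cost visibility starts with labeling work by owner or use case, which is what the cost allocation tags in the checklist below are for. The sketch aggregates hypothetical usage records, each a (tag, credits) pair, to show which workloads drive spend; in Snowflake the raw figures would come from the platform's usage and metering data rather than an in-memory list.

```python
from collections import defaultdict

# Hypothetical usage records: (cost allocation tag, credits consumed by one query or job).
USAGE = [
    ("marketing", 12.5),
    ("finance", 4.0),
    ("marketing", 7.5),
    ("ml_training", 30.0),
    (None, 2.0),  # untagged work is reported separately so it can be chased down
]


def credits_by_tag(usage):
    """Total credits per tag, largest first."""
    totals = defaultdict(float)
    for tag, credits in usage:
        totals[tag or "untagged"] += credits
    return dict(sorted(totals.items(), key=lambda item: item[1], reverse=True))


print(credits_by_tag(USAGE))
# {'ml_training': 30.0, 'marketing': 20.0, 'finance': 4.0, 'untagged': 2.0}
```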

Maintaining data governance becomes more challenging as more users gain access to AI-powered analytics. Organizations must balance democratization with control, ensuring that sensitive data remains protected while enabling broad access to non-sensitive insights. Role-based access controls, data masking, and audit logging provide the necessary guardrails without creating friction for legitimate use cases.
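Snowflake implements column-level protection with masking policies that are evaluated against the querying role. The Python sketch below mirrors only that idea, outside the platform: the roles, columns, and the fixed `***MASKED***` placeholder are hypothetical.

```python
# Hypothetical policy: roles allowed to see each sensitive column unmasked.
UNMASKED_ROLES = {
    "email": {"support_admin"},
    "salary": {"hr_admin"},
}
MASK = "***MASKED***"


def apply_masking(row: dict, role: str) -> dict:
    """Return a copy of `row` with sensitive columns masked unless `role` may see them."""
    return {
        column: value if column not in UNMASKED_ROLES or role in UNMASKED_ROLES[column] else MASK
        for column, value in row.items()
    }


employee = {"name": "Ana", "email": "ana@example.com", "salary": 90000}
print(apply_masking(employee, "analyst"))   # email and salary masked
print(apply_masking(employee, "hr_admin"))  # salary visible, email masked
```

Because masking is applied when data is read, the same table can serve both audiences without maintaining separate copies.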

Successful scaling requires a checklist approach:

- Establish data quality metrics and monitor them continuously

- Implement cost allocation tags to track spending by department or use case

- Create a governance framework that defines roles, responsibilities, and approval workflows

- Invest in training programs that build AI literacy across the organization

- Deploy monitoring tools that surface performance issues before they impact users

- Build redundancy into critical data pipelines to ensure availability

- Document best practices and share them across teams to accelerate adoption
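The first checklist item, continuously monitored data quality metrics, can start as simply as a completeness measure and a freshness measure compared against thresholds. A minimal sketch; the `loaded_at` field, the monitored column, and the 5% and two-hour limits are assumptions for illustration.

```python
from datetime import datetime, timedelta


def quality_metrics(rows, column, now):
    """Completeness (share of nulls in `column`) and freshness (time since the latest load)."""
    nulls = sum(1 for row in rows if row.get(column) is None)
    latest = max(row["loaded_at"] for row in rows)
    return {"null_rate": nulls / len(rows), "staleness": now - latest}


def quality_alerts(metrics, max_null_rate=0.05, max_staleness=timedelta(hours=2)):
    """Return a human-readable alert for every metric outside its threshold."""
    alerts = []
    if metrics["null_rate"] > max_null_rate:
        alerts.append(f"null rate {metrics['null_rate']:.0%} exceeds {max_null_rate:.0%}")
    if metrics["staleness"] > max_staleness:
        alerts.append(f"data is {metrics['staleness']} old (limit {max_staleness})")
    return alerts


now = datetime(2024, 6, 1, 12, 0)
rows = [{"customer_id": i if i != 2 else None, "loaded_at": datetime(2024, 6, 1, 9, 0)} for i in range(4)]
for alert in quality_alerts(quality_metrics(rows, "customer_id", now)):
    print(alert)
```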

Organizations that address these challenges proactively create a foundation for sustainable growth in AI capabilities. Those that ignore them often hit scaling bottlenecks that limit the business value they can extract from their data investments.

Strategic Benefits of AI Data Visualization in Enterprise Environments

Decision velocity—the speed at which organizations can evaluate options and commit to action—directly impacts business growth, market responsiveness, and the ability to experiment iteratively. Organizations with AI-enabled visualization outperform peers in time to insight and overall decision quality, creating competitive advantages that compound over time.

The strategic benefits extend across multiple dimensions:

Speed and Agility

- Faster reaction to market shifts enables proactive rather than reactive strategies

- Reduced time between hypothesis and validation accelerates innovation cycles

- Real-time monitoring allows immediate course correction when performance deviates from expectations

Empowerment and Productivity

- Non-technical staff gain independence in exploring data and answering their own questions

- Data teams shift focus from report generation to strategic analysis and model development

- Executives access insights directly rather than waiting for intermediaries to prepare summaries

Cost and Resource Optimization

- Reduction in both infrastructure and labor costs through automation and efficiency gains

- Elimination of redundant data preparation work across departments

- Lower total cost of ownership compared to traditional BI architectures

Risk Management and Compliance

- Improved forecasting accuracy reduces exposure to inventory, pricing, and capacity risks

- Consistent data definitions across the organization eliminate reporting discrepancies

- Automated audit trails support regulatory compliance and internal controls

The cumulative impact of these benefits transforms how enterprises operate. Organizations can pursue more ambitious growth strategies when they have confidence in their ability to measure results and adapt quickly. They can enter new markets with greater speed because they can analyze opportunity signals in real time. They can optimize operations continuously rather than in discrete improvement projects.

TextQL's solutions demonstrate how AI-driven analytics platforms deliver measurable business outcomes across industries. Marketing teams increase ROI by identifying high-performing channels and reallocating spend dynamically. Operations teams reduce costs by optimizing resource allocation based on predictive demand models. Finance teams improve forecast accuracy by incorporating a broader range of signals into their models.

Best Practices for Maximizing AI Data Visualization Impact in Snowflake

Analytics leaders must balance multiple priorities as they scale AI data visualization capabilities: ensuring robust governance, managing costs, driving adoption, and maintaining alignment with business objectives. A practical roadmap helps organizations navigate these competing demands while maximizing return on their data investments.

Ensure Robust Data Governance

Establish clear policies for data access, quality standards, and lifecycle management. Define ownership for each dataset and create accountability for maintaining accuracy and completeness. Implement automated controls that enforce policies consistently across all users and use cases. Data readiness for Snowflake Intelligence requires semantic modeling that captures business context and relationships.

Prioritize Data Readiness

Invest in the foundational work required to make data AI-ready before deploying advanced analytics. This includes standardizing schemas, enriching metadata, and documenting business logic. Organizations that skip this step often encounter accuracy problems that undermine user trust and limit adoption.

Invest in Semantic Modeling

Create a business-friendly layer that translates technical data structures into concepts that users understand. Semantic models define metrics consistently, establish relationships between entities, and encode business rules that ensure calculations are correct. This investment pays dividends by reducing confusion and enabling self-service analytics.
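The core of a semantic model is that each metric is defined once and every consumer, human or AI agent, calls the same definition. A minimal Python sketch of that idea; the metric names and formulas are hypothetical, and real semantic layers are usually declared in configuration files rather than code.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class Metric:
    name: str
    description: str
    compute: Callable[[Dict[str, float]], float]


def _safe_ratio(numerator: float, denominator: float) -> float:
    """Divide, returning 0.0 instead of failing when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


# Hypothetical semantic model: one shared definition per business metric.
SEMANTIC_MODEL = {
    m.name: m
    for m in (
        Metric("conversion_rate", "Orders divided by sessions",
               lambda t: _safe_ratio(t["orders"], t["sessions"])),
        Metric("average_order_value", "Revenue divided by orders",
               lambda t: _safe_ratio(t["revenue"], t["orders"])),
    )
}


def evaluate(metric_name: str, totals: Dict[str, float]) -> float:
    """Compute a metric from aggregated totals using its single shared definition."""
    if metric_name not in SEMANTIC_MODEL:
        raise KeyError(f"unknown metric {metric_name!r}; known: {sorted(SEMANTIC_MODEL)}")
    return SEMANTIC_MODEL[metric_name].compute(totals)


totals = {"sessions": 2000, "orders": 50, "revenue": 5000.0}
print(evaluate("conversion_rate", totals), evaluate("average_order_value", totals))  # 0.025 100.0
```

Rejecting unknown metric names, rather than guessing, is what keeps departments from quietly inventing competing definitions.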

Align Analytics Workloads to Business Value

Not all analytics use cases deliver equal value. Focus initial efforts on high-impact areas where insights directly influence revenue, cost, or risk. Use pilot projects to demonstrate value quickly, then expand to adjacent use cases as capabilities mature.

Monitor Cost and Usage Metrics

Use built-in platform tools for ongoing optimization. Track spending by department, use case, and user to identify opportunities for efficiency improvements. Set budget alerts that notify stakeholders before costs exceed thresholds. Review resource allocation regularly and adjust configurations based on actual usage patterns.
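Budget alerts often compare a run-rate projection with the budget rather than waiting for spend to cross it. Snowflake offers its own mechanisms for this, such as resource monitors; the sketch below only illustrates the arithmetic, assuming spend accrues linearly through the month and using a hypothetical 80% warning level.

```python
def projected_month_end(spend_to_date: float, day_of_month: int, days_in_month: int) -> float:
    """Linear run-rate projection: assumes spend so far continues at the same daily pace."""
    return spend_to_date / day_of_month * days_in_month


def budget_status(spend_to_date, day_of_month, days_in_month, budget, warn_at=0.8):
    """'alert' if the projection exceeds the budget, 'warning' past `warn_at` of it, else 'ok'."""
    projection = projected_month_end(spend_to_date, day_of_month, days_in_month)
    if projection > budget:
        return "alert"
    if projection >= warn_at * budget:
        return "warning"
    return "ok"


# Hypothetical department: 1,500-credit monthly budget, checked on day 10 of a 30-day month.
for spent in (300, 450, 600):
    print(spent, budget_status(spent, 10, 30, budget=1500))
# prints: 300 ok, then 450 warning, then 600 alert
```

A linear projection overreacts to one-off spikes early in the month; smoothing over a trailing window is a common refinement.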

Foster a Data-Curious Culture

Technical capabilities alone do not drive adoption; organizational culture plays an equally important role. Provide training that builds confidence in using AI-powered tools. Celebrate successes when teams use data to make better decisions. Create communities of practice where users can share tips and learn from each other.

Iterate Continuously

AI agents can continuously surface new insights as data accumulates and business conditions change. Establish processes for reviewing these insights regularly and incorporating them into decision workflows. Encourage experimentation and accept that not every hypothesis will prove correct.

Implementation Strategy Checklist:

- Assess current data quality and identify gaps

- Define governance policies and assign ownership

- Build semantic models for priority business domains

- Deploy AI agents for high-value use cases

- Train users and establish support channels

- Monitor adoption metrics and gather feedback

- Optimize costs based on usage patterns

- Expand capabilities to adjacent use cases

- Measure business impact and communicate results

- Refine approach based on lessons learned

Organizations that follow this structured approach create sustainable AI analytics programs that deliver increasing value over time. Those that rush deployment without adequate preparation often struggle with adoption and fail to realize the full potential of their investments.

Frequently Asked Questions

How does AI data visualization accelerate enterprise decision-making with Snowflake?

AI-powered data visualization in Snowflake empowers users to interact directly with data through natural language queries, generate instant insights from complex datasets, and respond quickly to changing business needs without relying on specialized technical teams. The platform's embedded AI agents automatically detect patterns, flag anomalies, and generate visual summaries that translate raw data into actionable intelligence. This eliminates the traditional bottleneck where business users must wait for data teams to build custom reports or dashboards.

What are the key benefits of AI-powered data visualization in Snowflake?

Key benefits include faster time to insight through automated analysis, improved forecasting accuracy using predictive models, greater productivity as non-technical users gain self-service capabilities, and automated trend identification that translates data investments into actionable business outcomes. Organizations also experience reduced infrastructure costs by eliminating data movement between systems, enhanced collaboration through shared access to trusted datasets, and improved decision quality through more comprehensive analysis of business context.

How do natural language queries simplify AI-driven analytics?

Natural language queries allow users to ask business questions in plain language—such as "Which products have the highest return rates this quarter?"—making analytics accessible to non-technical staff and speeding up the exploration and interpretation of data. The AI system interprets intent, translates the question into executable queries, validates assumptions, and presents results in intuitive visual formats. This conversational interface eliminates the need to learn SQL or understand database schemas, dramatically expanding who can participate in data-driven decision-making.

What tools integrate best with Snowflake for AI data visualization?

Snowflake natively supports AI-driven tools through its Cortex AI platform and integrates with leading visualization platforms such as Tableau, as well as other enterprise business intelligence solutions. The platform's open architecture supports custom integrations through APIs and connectors, enabling organizations to leverage existing tool investments while adding AI capabilities. TextQL's Snowflake integration provides autonomous AI agents that deliver advanced insights directly within the Snowflake environment.

How can organizations ensure data security and governance in AI analytics?

Organizations can rely on Snowflake's robust security features, including role-based access controls that limit data visibility to authorized users, comprehensive audit logging that tracks all data access and query activity, data masking capabilities that protect sensitive information while enabling analysis, and encryption both at rest and in transit. The platform's governance framework allows administrators to define policies centrally and enforce them consistently across all users and use cases, ensuring that AI-powered analytics remain trusted and compliant with regulatory requirements.